Verifying artificial intelligence-generated images: Socio-technical approaches to authenticity

by Michael Mncedisi Willie

Computing and Artificial Intelligence, Vol. 3, No. 4, 2025

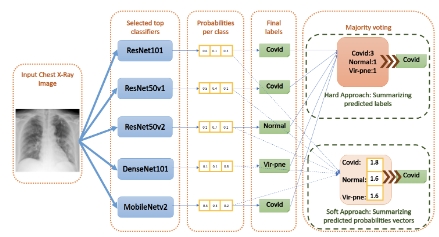

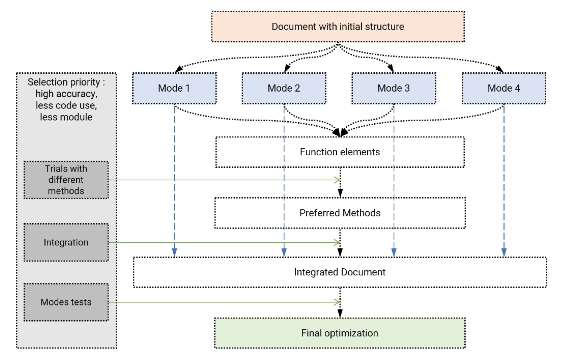

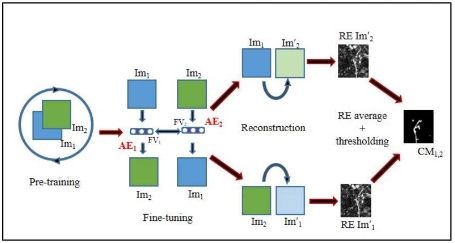

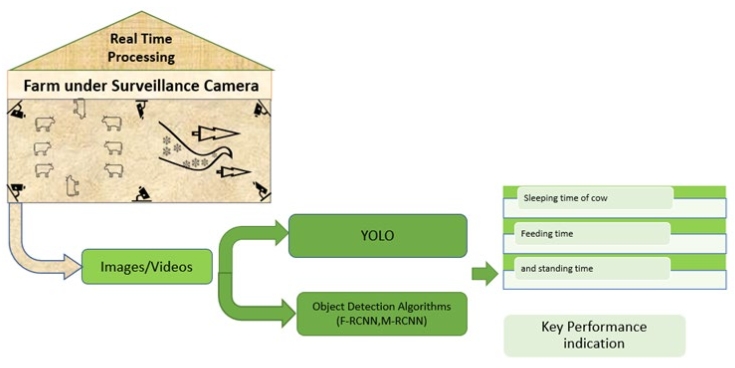

The rapid proliferation of artificial intelligence (AI) has transformed visual media, enabled highly realistic AI-generated images, and raised ethical, social, and security concerns. Generative artificial intelligence (Generative AI) architectures, including Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and diffusion models, enable the creation of content that is increasingly indistinguishable from human-made visuals, supporting creativity, education, and communication. However, these capabilities also introduce risks of manipulation, identity fraud, misinformation, and deepfake attacks across social, political, corporate, academic, and humanitarian domains. This study investigates AI image verification as a socio-technical response to synthetic visuals, focusing on social media, artistic, and forensic contexts. It employs a qualitative design combining a thematic literature review with case study analysis. Thematic analysis identified patterns in verification approaches, including pixel-level analysis, metadata forensics, machine learning classifiers, watermarking, and blockchain-enabled methods. Case studies explored real-world applications, highlighting perceptual biases, the strategic use of synthetic content, and challenges in governance and digital literacy. Findings reveal that human perception alone is insufficient for reliably discerning authenticity, with individuals frequently misclassifying AI-generated images as real. Hybrid technical approaches that integrate machine learning, metadata analysis, and blockchain verification significantly improve detection accuracy. Socio-technical factors, including platform policies, ethical norms, organisational governance, and user literacy, shape the effectiveness of verification methods.
The study presents a conceptual framework linking technological, organisational, and societal dimensions, emphasising the need for coordinated strategies that combine algorithmic innovation, regulatory oversight, and public engagement. Practical implications include deploying hybrid verification systems, strengthening governance and ethical standards, enhancing digital literacy, and fostering cross-disciplinary collaboration to safeguard trust, authenticity, and integrity in digital media.
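The hybrid verification idea summarised above can be illustrated with a minimal Python sketch. It is not the author's method, since the abstract specifies no implementation. Everything here is an assumption for illustration: the toy hash-chain `ProvenanceLedger` stands in for a blockchain registry, the metadata rules and generator tags are hypothetical placeholders, `classifier_prob` is assumed to come from some external ML detector, and the weights and threshold are arbitrary.

```python
import hashlib

GENESIS = "0" * 64
# Hypothetical placeholder generator tags for the metadata check; not real tool names.
HYPOTHETICAL_GENERATOR_TAGS = ("generator-x", "synth-studio")


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class ProvenanceLedger:
    """Toy append-only hash chain standing in for a blockchain registry (assumption)."""

    def __init__(self) -> None:
        # Each block is (previous block hash, image hash, this block's hash).
        self.blocks: list[tuple[str, str, str]] = []

    def register(self, image_bytes: bytes) -> str:
        prev = self.blocks[-1][2] if self.blocks else GENESIS
        image_hash = sha256_hex(image_bytes)
        block_hash = sha256_hex((prev + image_hash).encode())
        self.blocks.append((prev, image_hash, block_hash))
        return block_hash

    def is_intact(self) -> bool:
        # Recompute every link; editing any block breaks the chain.
        prev = GENESIS
        for stored_prev, image_hash, block_hash in self.blocks:
            if stored_prev != prev or sha256_hex((prev + image_hash).encode()) != block_hash:
                return False
            prev = block_hash
        return True

    def contains(self, image_bytes: bytes) -> bool:
        target = sha256_hex(image_bytes)
        return any(image_hash == target for _, image_hash, _ in self.blocks)


def metadata_flags(meta: dict) -> int:
    """Count simple metadata red flags (hypothetical rules)."""
    flags = 0
    if not meta.get("camera_model"):  # no capture-device record
        flags += 1
    software = str(meta.get("software", "")).lower()
    if any(tag in software for tag in HYPOTHETICAL_GENERATOR_TAGS):
        flags += 1
    return flags


def hybrid_verdict(image_bytes: bytes, classifier_prob: float, meta: dict,
                   ledger: ProvenanceLedger, w_ml: float = 0.6,
                   w_meta: float = 0.4, threshold: float = 0.5) -> str:
    # A match in an intact ledger shows these exact bytes were registered earlier.
    if ledger.is_intact() and ledger.contains(image_bytes):
        return "provenance-verified"
    # Otherwise fuse the ML detector output with a normalised metadata score in [0, 1].
    meta_score = min(metadata_flags(meta), 2) / 2
    score = w_ml * classifier_prob + w_meta * meta_score
    return "likely synthetic" if score >= threshold else "undetermined"


if __name__ == "__main__":
    ledger = ProvenanceLedger()
    original = b"raw bytes of a registered photo"
    ledger.register(original)
    print(hybrid_verdict(original, 0.9, {}, ledger))  # provenance-verified
    print(hybrid_verdict(b"unregistered", 0.9, {"software": "Generator-X 2.0"}, ledger))  # likely synthetic
```

The sketch never returns "authentic" from the classifier and metadata scores alone: a low fused score only means no evidence of synthesis was found, and a ledger match only shows that the exact bytes were registered earlier, not that the registrant was truthful.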


Open Access