Predicting manipulated regions in deepfake videos using convolutional vision transformers

by Mohan Bhandari, Sushant Shrestha, Utsab Karki, Santosh Adhikari, Rajan Gaihre

Computing and Artificial Intelligence, Vol.2, No.2, 2024;


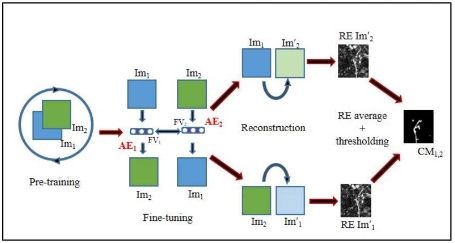

Deepfake technology, which uses artificial intelligence to create and manipulate realistic synthetic media, poses a serious threat to the trustworthiness and integrity of digital content. Deepfakes can generate, swap, or modify faces in videos, altering the appearance, identity, or expression of individuals. This study presents an approach to deepfake detection based on a convolutional vision transformer (CViT), a hybrid model that combines convolutional neural networks (CNNs) and vision transformers (ViTs). The proposed method uses a 20-layer CNN to extract learnable features from face images and a ViT to classify them as real or fake. It also employs MTCNN, a multi-task cascaded convolutional network, to detect and align faces in videos, improving the accuracy and efficiency of face extraction. The method is evaluated on the FaceForensics++ dataset, using 15,800 images sourced from 1,600 videos. With an 80:10:10 split ratio, the proposed method achieves an accuracy of 92.5% and an AUC of 0.91. Gradient-weighted Class Activation Mapping (Grad-CAM) visualizations highlight the image regions that most influence each decision. The proposed method shows a strong ability to distinguish genuine from manipulated videos, contributing to media authenticity and security.
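To make the 80:10:10 split concrete, here is a minimal sketch of how 15,800 images could be partitioned. The abstract does not say whether the split is made per image or per video, nor which shuffling or seed was used; the function name `split_indices`, the image-level shuffle, and the seed are all illustrative assumptions, not the authors' procedure.

```python
import random


def split_indices(n_items, percents=(80, 10, 10), seed=0):
    """Shuffle range(n_items) and cut it into three index lists.

    Assumption: the split is done per image. The abstract does not
    state the granularity or the seed, so both are hypothetical here.
    """
    if sum(percents) != 100:
        raise ValueError("percents must sum to 100")
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    # Integer arithmetic avoids floating-point rounding in the cut points.
    n_first = n_items * percents[0] // 100
    n_second = n_items * percents[1] // 100
    first = indices[:n_first]
    second = indices[n_first:n_first + n_second]
    third = indices[n_first + n_second:]  # remainder goes to the last part
    return first, second, third


train, val, test = split_indices(15_800)
print(len(train), len(val), len(test))  # prints: 12640 1580 1580
```

Under these assumptions the three parts hold 12,640, 1,580, and 1,580 images. Because several frames come from the same video, a per-video split would be the alternative if frames from one video should not appear in more than one part.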


Open Access
